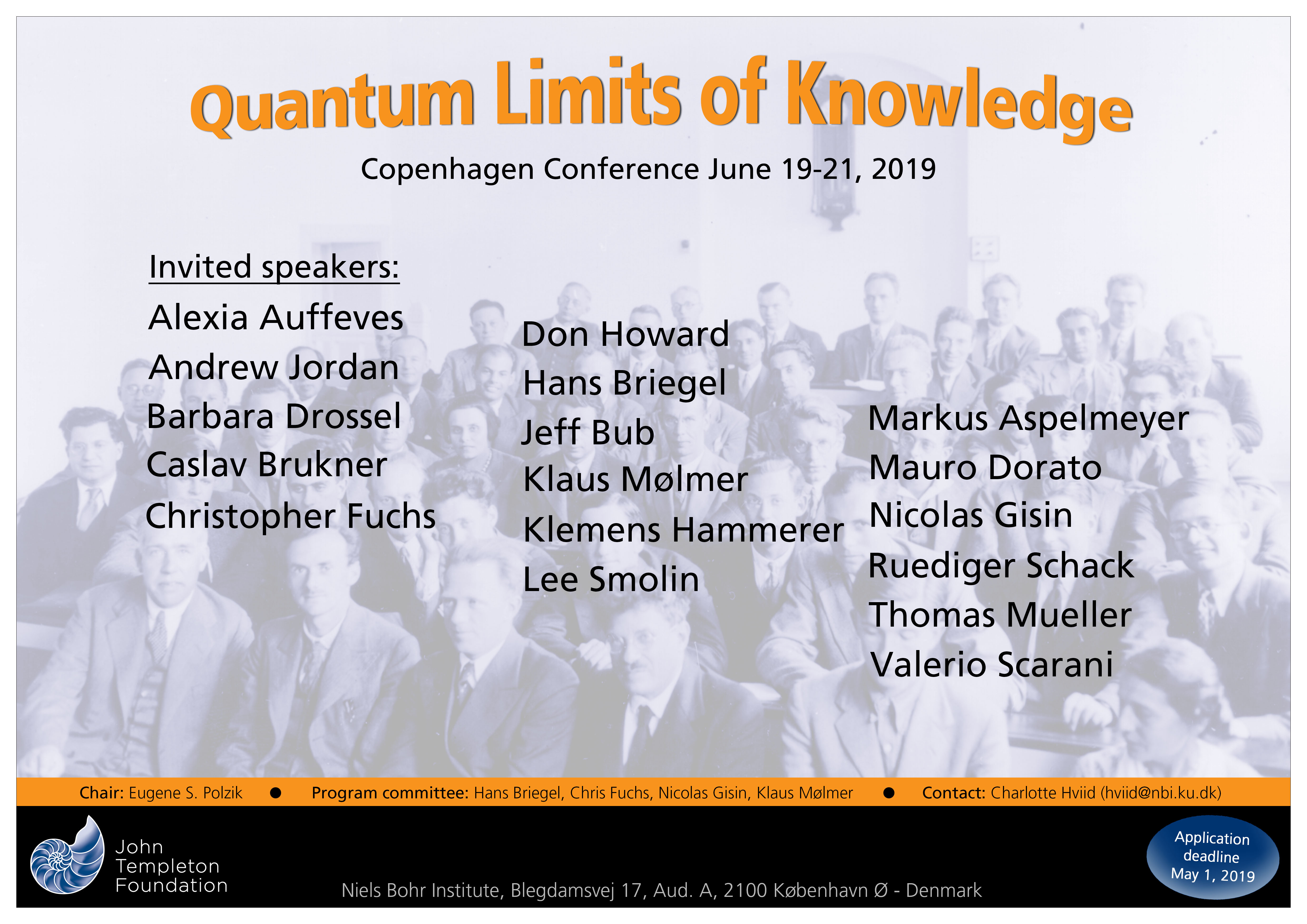

Quantum Limits of Knowledge

Aud A

Blegdamsvej 17

-

-

09:00

→

09:10

Welcome 10m Aud A

Aud A

Blegdamsvej 17

2100 Copenhagen Ø Denmark

09:10

→

11:10

Quantum and/or Classical Worlds? Aud A

Convener: Klaus Molmer (University of Aarhus)

09:10

There is no quantum world 40m

The theory of relativity is about the explanatory framework of physics; it is a ‘principle’ theory, to use Einstein’s term, in contrast with ‘constructive’ theories. Specifically, we were wrong about the structure of space and time. Quantum mechanics is also a ‘principle’ theory, about a different feature of this framework: we were wrong about commutativity, more specifically about Booleanity, what George Boole characterized as ‘the conditions of possible experience.’ I will elaborate on the significance of non-Booleanity as it relates to the ‘quantum limits of knowledge’ theme of the conference and to the title, which is a quote from Aage Petersen’s article ‘The philosophy of Niels Bohr’ in Bulletin of the Atomic Scientists 19, 8–14 (1963): ‘When asked whether the algorithm of quantum mechanics could be considered as somehow mirroring an underlying quantum world, Bohr would answer, “There is no quantum world. There is only an abstract quantum physical description. It is wrong to think that the task of physics is to find out how nature is. Physics concerns what we can say about nature.”’

Speaker: Jeffrey Bub (University of Maryland)

09:50

Indeterminism in quantum and classical physics: the future is open 40m

Quantum physics is usually presented as non-deterministic. This can be proven assuming no instantaneous influences at a distance and the existence of independent systems. However, quantum theory can be supplemented by additional variables (e.g. Bohmian particles) that make the extended theory deterministic, though these additional variables are necessarily inaccessible. Classical physics is usually presented as deterministic. This is the consequence of deterministic evolution equations and the use of real numbers to describe initial conditions. The use of real numbers is very convenient, but it is an assumption. Typical real numbers contain an infinite amount of (Shannon) information. An alternative classical mechanics based on finite information quantities is empirically equivalent to classical mechanics. However, for chaotic classical dynamical systems, this alternative classical mechanics is intrinsically indeterministic. Hence, the huge empirical successes and enormous explanatory power of classical mechanics do not imply determinism. Actually, if one wishes to avoid infinities, then indeterminism is the more natural view for classical physics as well. Here too, though, one may supplement the theory by adding inaccessible variables, e.g. the usual real numbers.

Indeterminism implies that the future is open, a view much closer to the way we experience the world. It implies, among other things, that when time passes, new information is created, instead of information coded in inaccessible initial conditions gaining relevance. In both cases, knowledge about the future is intrinsically limited.

Speaker: Nicolas Gisin (University of Geneva)

10:30

What is quantum in quantum randomness? 40m

It is often said that quantum and classical randomness are of a different nature, the former being ontological and the latter epistemological. However, the question of "What is quantum in quantum randomness", i.e. what is the impact of quantization and discreteness on the nature of randomness, has so far remained unanswered. In this talk I will first make explicit the differences between quantum and classical randomness within a recently proposed ontology for quantum mechanics based on contextual objectivity. In this view, quantum randomness is the result of contextuality and quantization. I will show that this approach strongly impacts the purposes of quantum theory as well as its areas of application. In particular, it challenges current programs inspired by classical reductionism, aiming at the emergence of the classical world from a large number of quantum systems. In a second part, I will analyze quantum physics and thermodynamics as theories of randomness, unveiling their mutual influences. I will finally consider new technological applications of quantum randomness opened in the emerging field of quantum thermodynamics.

Speaker: Alexia Auffeves (CNRS)


11:10

→

11:40

Coffee Break 30m NBI Canteen

NBI Canteen

-

11:40

→

13:00

Quantum Bayesianism and Copenhagen Interpretation Aud A

Convener: Hans Briegel (University of Innsbruck)

11:40

Notwithstanding Bohr, Three Tenets of QBism 40m

Without Niels Bohr, QBism would be nothing. However, in this talk, I try my best to explain how QBism is no minor tweak to Bohr's view on quantum mechanics. Along the way, I introduce three tenets of QBism: 1) All probabilities, including all quantum probabilities, are so subjective they never tell nature what to do. This includes probability-1 assignments. Quantum states thus have no “ontic hold” on the world. 2) The Born Rule, the foundation of what quantum theory means for QBism, is a normative statement. It is about the decision-making behavior any individual agent should strive for; it is not a descriptive “law of nature” in the usual sense. 3) Quantum measurement outcomes just are personal experiences for the agent gambling upon them. Particularly, quantum measurement outcomes are not, to paraphrase Bohr, “instances of irreversible amplification in devices whose design is communicable in common language suitably refined by the terminology of classical physics.” Finally, I discuss how Bohr's notion of “phenomena” remains a guiding light to QBism in its endeavor to identify an ontology compelling the use of quantum theory to decision-making agents: as best we can see, it has to do with the taking account of a world in which, as William James put it, “new being comes in local spots and patches.”

Speaker: Christopher Fuchs (University of Massachusetts Boston)

12:20

Quantum jumps, quantum trajectories and the Copenhagen interpretation 40m

From the early days of quantum mechanics, leading physicists were concerned about the role played by probabilities, the discontinuous nature of quantum jumps and the collapse of the state of quantum systems subject to measurement. While the evolution of a single localized quantum system already raises the most fundamental questions about the meaning of the theory, correlation measurements on entangled states of several particles made it possible to address these questions and put different interpretations to the test in a quantitative manner.

In this talk, I shall consider continuous or repeated measurements on a single quantum system. A recent extension of the quantum state formalism describes what is known by an observer about a system at time t, if she has monitored the system over times both before and after t. This extension draws attention to questions that were not considered in detail in the past, and I shall address how they relate to and illuminate conceptual ideas and quantitative aspects of the Copenhagen (and QBism) interpretation.

Speaker: Klaus Molmer (University of Aarhus)


13:00

→

14:00

Lunch 1h NBI Canteen

NBI Canteen

-

14:00

→

15:20

Time, Energy and Work Aud A

Convener: Christopher Fuchs (University of Massachusetts Boston)

14:00

Time and causation within a realist completion of quantum mechanics 40m

I report on a programme of research aimed at constructing a realist completion of quantum mechanics. The programme is based on an energetic causal set ontology within which time (in the sense of causation), energy and momentum are assumed real and fundamental, while spacetime and its dynamics as well as quantum mechanics emerge in appropriate limits. It comprises papers on energetic causal sets, with Marina Cortes, as well as papers on the real ensemble formulation of quantum mechanics and, most recently, the causal theory of views.

The relevant papers are summarized and linked here: https://leesmolin.com/einsteins-unfinished-revolution/related-scientific-papers/

Speaker: Lee Smolin (Perimeter Institute)

14:40

Spooky work at a distance 40m

The quantum measurement process is usually discussed in terms of the knowledge of the observer, or in terms of information available about the quantum system. In my talk, I will discuss how quantum measurement may also be viewed as a stochastic energy source, where energy can be transferred from the meter to the system with near unit efficiency in order to do useful work. Further, I will also show that this measurement-generated work can be accomplished in systems where the meter seemingly never interacts with the system, as in the case of interaction-free measurements.

[1] Cyril Elouard, Andrew N. Jordan, Efficient Quantum Measurement Engines, Phys. Rev. Lett. 120, 260601 (2018).

[2] Cyril Elouard, Mordecai Waegell, Benjamin Huard, Andrew N. Jordan, in preparation.

Speaker: Andrew N. Jordan (University of Rochester)


15:20

→

15:50

Coffee Break 30m NBI Canteen

NBI Canteen

-

15:50

→

16:50

Niels Bohr Archive Tour 1h

Meeting point at the reception

-

19:30

→

20:50

Conference dinner 1h 20m Restaurant SALT

Restaurant SALT


09:00

→

10:20

Quantum Bayesianism and Copenhagen Interpretation Aud A

Convener: Nicolas Gisin (University of Geneva)

09:00

Artificial agency and quantum experiment 40m

In the quantum Bayesian formulation of quantum mechanics (QBism), the notion of an agent plays a central role. It is, however, a primitive notion that remains unresolved within the theory. If one entertains the possibility of automated quantum experiments designed and run by artificial intelligence, the problem of artificial agency comes into focus. In the talk I will discuss the role and scope of artificial agency and AI for explorative experiment and for quantum foundations.

Speaker: Hans Briegel (University of Innsbruck)

09:40

Why quantum states represent belief rather than knowledge 40m

The title of this conference, "quantum limits of knowledge", expresses the common view that quantum mechanics imposes restrictions on the knowledge we can have about a physical system. QBism challenges this view by taking quantum states to represent states of belief rather than states of knowledge. This talk explains how this shift in language allows QBists to view the Born rule as an addition to, and not a restriction of, probability theory.

Speaker: Ruediger Schack (Royal Holloway University of London)


10:20

→

10:50

Coffee Break 30m NBI Canteen

NBI Canteen

-

10:50

→

12:10

Reference Frames, Relativity and Gravity in QM Aud A

Convener: Ruediger Schack (Royal Holloway University of London)

10:50

How does the world look for a quantum particle? 40m

The covariance of physical laws in quantum reference frames

In our laboratories, we perform experiments with single quantum particles which can be in a superposition of different states or entangled with other particles, showing features which are strikingly different from those of the classical world. What would be the description of the world, if we could "sit" on a particle that is in a superposition state with respect to the laboratory frame of reference? The relational approach to physics suggests that the description from such a "quantum reference frame" is exactly the opposite: while for itself, the particle appears classical, it is the laboratory that is in a quantum superposition of states. In the talk, I will introduce a quantum theory framework for an observer that is "attached" to a quantum particle. I will show that although the features of observed systems - such as entanglement and superposition - are observer-dependent, the physical laws themselves remain the same for all observers (i.e. are invariant under the transformation between quantum reference frames). Finally, I will conclude with the insights the new framework provides for understanding the Wigner's friend thought experiment.

Speaker: Caslav Brukner (University of Vienna)

11:30

Object-Observer Entanglement and Back-Action Evasion in Continuous Position Measurements 40m

At a fundamental level, quantum mechanics dictates that a measurement corresponds to a physical process generating entanglement between the measured object and the measurement apparatus (and possibly the rest of the world). This entanglement inevitably results in a disturbance of the measured system - the famous Measurement Back Action in Quantum Mechanics. Measurement Back Action may become the main factor limiting the sensitivity in repeated or continuous measurements, as is the case in laser-interferometric position sensors such as LIGO. I will show how Object-Observer Entanglement and Measurement Back Action - as well as Back Action Evasion - can be demonstrated and tested in table-top optomechanical position sensors. Back Action Evasion can be achieved by measuring position with respect to an engineered reference frame corresponding to a negative mass harmonic oscillator, as demonstrated by C.B. Møller, et al.

Ref: C.B. Møller, R.A. Thomas, G. Vasilakis, E. Zeuthen, Y. Tsaturyan, K. Jensen, A. Schliesser, K. Hammerer, E.S. Polzik: Quantum back-action-evading measurement of motion in a negative mass reference frame, Nature 547, 191–195 (2017)

Speaker: Klemens Hammerer (University of Hannover)


12:10

→

13:00

Round Table Discussion 50m

-

13:00

→

14:00

Lunch 1h NBI Canteen

NBI Canteen

-

14:00

→

15:00

Lab tours and discussions 1h

-

15:00

→

15:30

Coffee Break 30m NBI Canteen

NBI Canteen

-

15:30

→

16:50

Quantum and/or Classical Worlds? Aud A

Convener: Jeffrey Bub (University of Maryland)

15:30

Bohr’s relational holism and the classical-quantum interaction 40m

In my presentation I will focus on Bohr’s problematic distinction between classical systems and quantum systems. The first thesis that I will discuss is that such a distinction is motivated by the fact that the shared, objective language in which properties of the quantum realm must be described is a condition of possibility to talk about quantum systems. These systems cannot be subject to a scientific analysis based itself on quantum mechanics, since any use of the latter theory presupposes the classical language. The second thesis that I will discuss is an analysis of Bohr’s classical/quantum distinction in terms of the distinction between theories of principle and constructive theories proposed by Einstein in 1919. Finally, I will discuss the various senses in which this distinction might be interpreted as contextual and therefore understood holistically in terms of a peculiar inseparability of the quantum system from the classical apparatus.

Speaker: Mauro Dorato (University of Rome III)

16:10

What condensed matter physics and statistical physics teach us about the limits of unitary time evolution 40m

The Schrödinger equation for a macroscopic number of particles is linear in the wave function, deterministic, and invariant under time reversal. In contrast, the concepts used and calculations done in statistical physics and condensed matter physics involve stochasticity, nonlinearities, irreversibility, top-down effects, and elements from classical physics. The problems posed by reconciling these approaches with unitary quantum mechanics are of a similar type as the quantum measurement problem. My talk will argue that rather than aiming at reconciling these contrasts one should use them to identify the limits of quantum mechanics. For the simplest macroscopic system, a gas in thermal equilibrium, the length and time scales beyond which unitary time evolution and linear superposition break down are the thermal wavelength and the thermal time. The reasons for this breakdown are ascribed to the irreversible emission of photons into space and to the uncontrollability of the microscopic state.

Speaker: Barbara Drossel (Technische Universitaet Darmstadt)


09:00

→

12:20

Reference Frames, Relativity and Gravity in QM Aud A

Convener: Eugene Polzik

09:00

On quantum limits of the idea of knowledge as self-location 40m Aud A

There is a strong tradition in epistemology that represents an agent's belief and knowledge via the task of self-location within a set of possible worlds. For the case of an agent's beliefs, the idea is, briefly, as follows: There is a set of possible worlds ("centered" complete epistemic possibilities including the agent's current place and time). Only some of those worlds are compatible with the agent's evidence. So via its evidence, the agent has partial information about where it is. The agent believes exactly what is true in all these worlds compatible with the evidence. Acquiring more evidence means to exclude more possibilities and thereby to acquire more beliefs. Ideally, in the limit, an agent can narrow down these possibilities to just one, thereby completely solving the task of self-location and deciding all questions.

While the self-location model has a clear formal structure and a certain appeal, it faces serious difficulties. A well-known issue is that all necessities would have to be known, which is unrealistic. In my talk I will focus on an issue that is more specific to quantum scenarios: The model assumes that the agent is indeed perfectly located in one possible world. On the standard conception of possible worlds together with a linear temporal ordering, this means that all future outcomes of possible experiments must really be fixed for the agent. I will discuss to what extent the self-location model thus amounts to an assumption of hidden variables, and what a more realistic representation in terms of branching possibilities could look like.

Speaker: Thomas Müller (Universität Konstanz)

09:40

On the role of gravity in table-top quantum experiments 40m Aud A

I will discuss the challenges and prospects for isolating and exploring gravity as a relevant coupling mechanism in table-top quantum experiments. This includes quantum limits on the metric generated by a quantum source mass and possible schemes to measure it. Experimentally, a central role is played by the possibility of achieving quantum control over motional states of levitated solid-state particles.

Speaker: Markus Aspelmeyer (University of Vienna)

10:20

Coffee Break 40m NBI Canteen

NBI Canteen

-

11:00

Device-independent snapshots 40m Aud A

The notion of device-independent certification started in the context of key distribution, and has expanded to several similar tasks. But it has also led to interesting foundational results, notably by looking at quantum correlations as a special case of no-signaling ones, but also in signaling models. My talk will be a series of snapshots devoted to these results.

Speaker: Valerio Scarani (National University of Singapore)

11:40

Performance of Port-based teleportation 40m Aud A

Quantum teleportation is one of the fundamental building blocks of quantum Shannon theory. While ordinary teleportation is simple and efficient, port-based teleportation (PBT) enables applications such as universal programmable quantum processors, instantaneous non-local quantum computation and attacks on position-based quantum cryptography. In this work, we determine the fundamental limit on the performance of PBT: for arbitrary fixed input dimension and a large number N of ports, the error of the optimal protocol is proportional to the inverse square of N. We also give an improved converse bound of matching order in the number of ports. In addition, we determine the leading-order asymptotics of PBT variants defined in terms of maximally entangled resource states.

This is joint work with Felix Leditzky, Christian Majenz, Graeme Smith, Florian Speelman, Michael Walter, available here: https://arxiv.org/abs/1809.10751

Speaker: Matthias Christandl (University of Copenhagen)


12:20

→

13:00

Closing Remarks 40m Aud A


13:00

→

14:00

Lunch 1h NBI Canteen

NBI Canteen
